How to Build a Local AI Personal Assistant in 2026 (Ollama + DeepSeek + Open WebUI)

Written by Sumit Patel

Published May 1, 2026

Reading level: Advanced Strategy

Reading time: 13 min

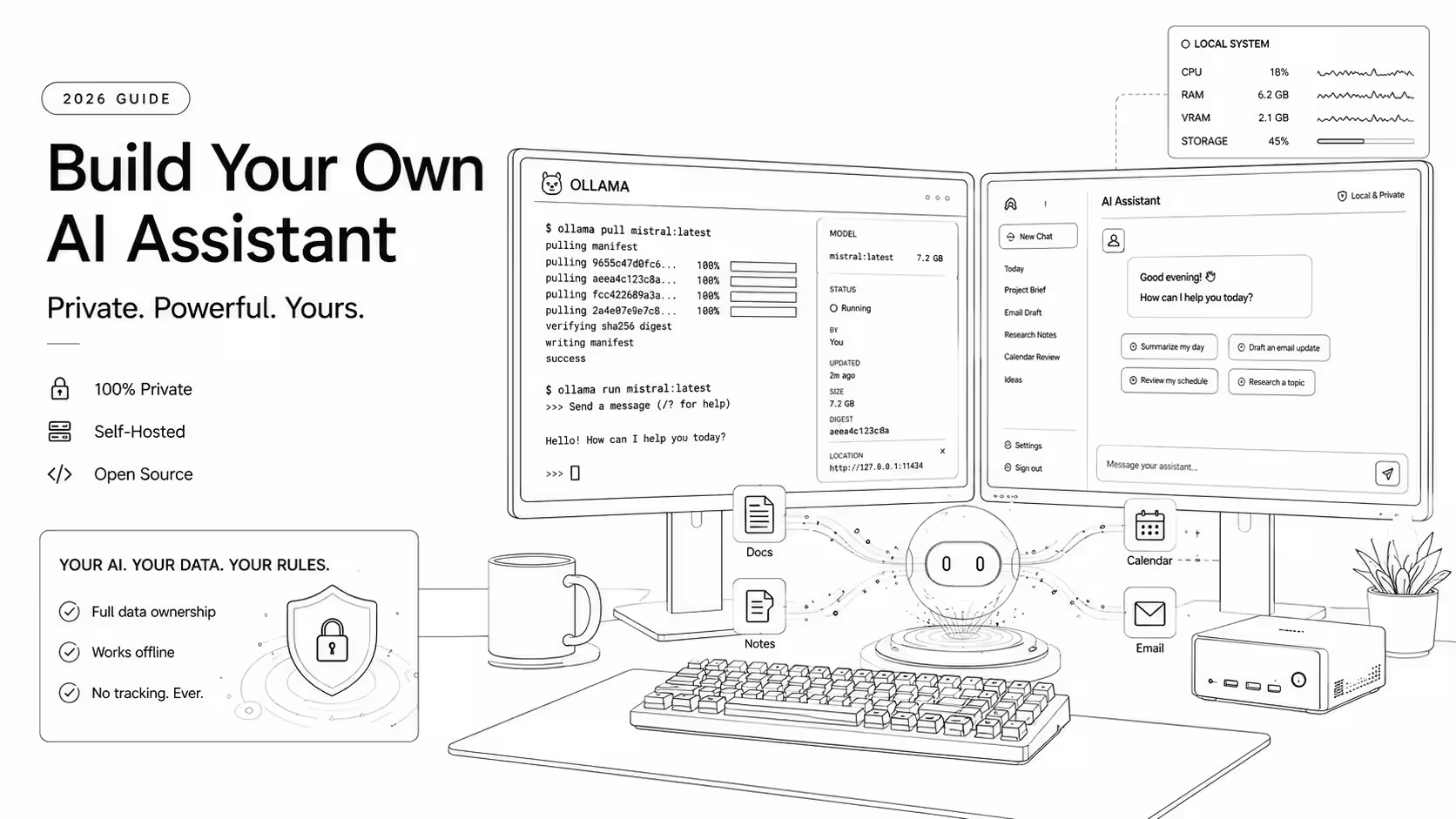

What You're Actually Building

1. Runtime: Ollama (free, runs on Mac/Windows/Linux, serves models via a local REST API).

2. Model: Llama 4 8B (mid-range GPU) or DeepSeek-V3 quantized (24GB VRAM / Apple Silicon 36GB+).

3. Interface: Open WebUI (free, runs in Docker, ChatGPT-style UI that connects to Ollama automatically).

4. Data layer: Chroma (local vector store) + nomic-embed-text (local embeddings via Ollama) for document RAG.

5. First use case: document search and summarization. Read-only, immediately testable, validates the entire stack.

6. Cost after hardware: $0/month.

Why This Guide Exists and What It Actually Covers

Most 'build a local AI assistant' posts are one of two things: a shallow tutorial that stops at 'ollama run llama3' and calls it an assistant, or a graduate-level engineering post that assumes you're comfortable wiring RAG pipelines before breakfast. This is neither. It covers the decisions a working developer needs to make — which model and why, on what hardware, connected to which data, with which guardrails — in enough depth to implement without assuming you've done this before. Technical claims here are verified against current Ollama documentation, model papers, and community benchmarks as of May 2026. Where results will vary based on hardware or use case, I'll say so explicitly.

In 2026, building your own AI personal assistant is a practical productivity decision, not a weekend experiment for ML enthusiasts. Two things changed.

First, open-source model quality crossed a meaningful threshold. DeepSeek-V3 and Llama 4 running locally on consumer hardware now produce output quality that 18 months ago required a paid API call. For document work, drafting, and code help (the primary use cases for a personal assistant), the gap between a good local model and GPT-4o is narrow on focused tasks.

Second, the tooling to run them became genuinely accessible. Ollama handles model management and GPU acceleration. Open WebUI gives you a full chat interface in one Docker command. Chroma or Qdrant handles document retrieval without requiring a machine learning background. The parts that used to take days now take hours.

The reasons to build locally are also getting stronger, not weaker. Every query you send to ChatGPT, Claude, or Gemini is processed on a third-party server. Depending on your plan and their current policy, it may be logged, reviewed, or used for model training. For professional work (client documents, unreleased code, financial notes, legal context), that is a real consideration, not paranoia.

This guide covers everything from zero to a working, useful local assistant: model selection with honest hardware requirements, the full Ollama + Open WebUI setup, connecting your documents via RAG, adding tool integrations, writing a system prompt that actually controls behavior, and the guardrails you need before trusting it with real work.

What a Local AI Assistant Can and Cannot Do in 2026

Being clear about capabilities upfront saves time. A local assistant running on consumer hardware in 2026 can handle most daily knowledge work. It cannot do everything a frontier cloud model can.

Building Locally vs. Staying on Cloud: The Honest Tradeoff

This is not a religious debate. Both options are legitimate. Here is what each one actually gets you.

Choosing the Right Model for Your Hardware

The model choice determines whether your assistant feels fast and useful or slow and frustrating. Match the model to your hardware first, then optimize for capability within that constraint.

DeepSeek-V3: Best for Reasoning and Coding Tasks

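DeepSeek-V3 is a Mixture-of-Experts (MoE) architecture. The model has 671B total parameters (the published checkpoint is listed as 685B because it includes the multi-token-prediction module), but only ~37B are active per token. Active parameters determine compute per token, and therefore inference speed. Memory is a different matter: every expert has to be loaded, so weight size scales with the total parameter count of the build you pull, not the active count. This distinction matters for hardware planning. The roughly 20–22GB of VRAM quoted for a Q4_K_M build is what a dense model of about 32B parameters needs at that quantization, so before committing to hardware, check the download size Ollama reports for the exact tag you intend to run.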

In practice, DeepSeek-V3 produces noticeably better output than same-active-parameter alternatives on reasoning-heavy tasks: multi-step planning, code generation, data analysis, and structured reasoning chains. If your assistant's primary job is working through complex problems, reviewing non-trivial code, or synthesizing research, DeepSeek-V3 is the right model.

Hardware requirement: 24GB VRAM (NVIDIA RTX 4090 or equivalent) or Apple Silicon Mac with 36GB+ unified memory. Below that threshold, use a smaller model and don't force DeepSeek-V3 through CPU offloading — the inference speed will be too slow for daily use.

Llama 4: Best Generalist Choice for Most Hardware Ranges

Meta released Llama 4 in April 2025. It ships in multiple sizes: Scout (17B active/109B total MoE), Maverick (17B active/400B total MoE), and smaller dense variants. For practical local assistant use:

- Llama 4 8B dense: runs on 8GB VRAM at 20–35 tokens/second. Handles drafting, summarization, and Q&A well. Not competitive with larger models on complex reasoning.
- Llama 4 Scout (via Ollama): strong all-around performance across writing, code, and reasoning. All 109B parameters must be loaded even though only 17B are active per token, so the Q4 weights come to roughly 60GB. Plan for a large unified-memory machine or multiple GPUs rather than a single 24GB card.

Llama 4 also has the largest fine-tuning community of any open-source model family, which means specialized variants — instruction-tuned, coding-tuned, long-context — are available via Ollama's model registry.

Note: Llama 4's exact benchmark numbers relative to DeepSeek-V3 depend heavily on the task type. For coding and structured reasoning, DeepSeek-V3 has an edge. For general writing, summarization, and diverse task handling, Llama 4 is comparable.

Smaller Models When Speed Matters More Than Scale

For users with 8–12GB VRAM who need fast, responsive inference:

- Gemma 3 9B (Google): strong reasoning-per-parameter ratio, runs at 30–50 tokens/second on RTX 3060/4060 class hardware. Good for note search, summarization, light drafting.
- Phi-4 14B (Microsoft): exceptional reasoning for its size. Requires ~10GB VRAM in Q4. Best small model for coding tasks.
- Mistral Small 22B: well-rounded, requires 14–16GB VRAM in Q4. Good middle ground if you have 16GB VRAM.

All of these are pulled with a single Ollama command. You can switch between models in under a minute, so don't overthink the initial choice. Try one, run it for a week, switch if needed.
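The VRAM figures above can be sanity-checked with back-of-envelope arithmetic: weight memory is roughly parameter count times bits per weight, divided by eight. The sketch below assumes about 4.5 bits per weight for a Q4-class quantization and a flat 20% allowance for KV cache and runtime buffers. Both numbers are illustrative assumptions, not Ollama measurements, and `estimate_vram_gb` is a hypothetical helper.

```python
def estimate_vram_gb(params_billions: float,
                     bits_per_weight: float = 4.5,  # assumed Q4-class average
                     overhead: float = 0.20) -> float:  # assumed KV cache + buffers
    """Rough memory estimate in GB for quantized weights plus runtime overhead.

    For MoE models, pass the TOTAL parameter count: all experts stay in memory.
    """
    # billions of params * bits per weight / 8 bits per byte = gigabytes of weights
    weight_gb = params_billions * bits_per_weight / 8
    return round(weight_gb * (1 + overhead), 1)


for name, size in [("Phi-4 14B", 14), ("Mistral Small 22B", 22)]:
    print(f"{name}: ~{estimate_vram_gb(size)} GB")
```

Treat the result as a first filter only. Long context windows grow the KV cache well past a flat percentage, so leave headroom.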

The Practical Recommendation by Hardware

Step 1: Install Ollama and Get a Model Running

Ollama handles model downloading, file management, GPU acceleration, and serves a local REST API compatible with the OpenAI API format. It is the right foundation — it abstracts everything that used to require manual CUDA configuration, environment setup, and model-specific inference scripts.
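To make the "local REST API" concrete, here is a minimal sketch of a chat call against Ollama's native `/api/chat` endpoint using only the standard library. It assumes Ollama is running on its default port (11434) and that you have already pulled a model. The helper names `build_chat_request` and `chat` are illustrative, not part of Ollama.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # assumes the default local port


def build_chat_request(model: str, user_text: str, system: str = "") -> dict:
    """Build the JSON body for a single-turn, non-streaming chat request."""
    messages = []
    if system:
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": user_text})
    return {"model": model, "messages": messages, "stream": False}


def chat(model: str, user_text: str, system: str = "") -> str:
    """Send the request to the local Ollama server and return the reply text."""
    payload = json.dumps(build_chat_request(model, user_text, system)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        body = json.load(resp)
    return body["message"]["content"]
```

With the server running, `chat("llama3", "Summarize this paragraph: ...")` returns the model's reply as a string. Swap in whichever model tag you actually pulled.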

Step 2: Connect Your Documents with RAG

A model that answers general questions is a local ChatGPT. What makes it a personal assistant is giving it context about your specific work — your notes, documents, decisions, and project knowledge. That requires RAG (Retrieval Augmented Generation).

How RAG works: your documents are chunked into pieces, each chunk is converted into a vector (a numerical representation of its meaning) by an embedding model, and stored in a vector database. When you ask a question, the same embedding model converts your question into a vector, the database finds the document chunks most similar in meaning, and those chunks are injected into the model's context window before it generates a response. The model reads the relevant parts of your documents at query time — it doesn't need to memorize them.
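The retrieval flow described above can be sketched in a few lines. To keep the example runnable without a model, `embed` here is a toy stand-in (plain word counts) rather than a real embedding model, and the sample chunks are made-up data. The ranking and prompt-injection steps are the part that carries over.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(question: str, chunks: list[str], k: int = 2) -> str:
    """Inject the retrieved chunks into the prompt ahead of the question."""
    context = "\n---\n".join(retrieve(question, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


chunks = [
    "The Q3 invoice for Acme was sent on October 2.",
    "Team offsite is planned for the second week of March.",
    "Acme asked for net-60 payment terms on the Q3 invoice.",
]
print(build_prompt("What payment terms did Acme ask for on the invoice?", chunks))
```

A real setup replaces `embed` with a learned embedding model and the sorted list with a vector database query, but the shape of the pipeline is the same.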

Option 1: Use Open WebUI's Built-In RAG (Easiest Path)

Open WebUI has document upload and RAG support built in. You don't need to write a single line of Python to get started.

Setup: Settings → Documents → configure your embedding model (select nomic-embed-text — this is a local embedding model you pull via Ollama, no external API needed).

Option 2: Python-Based RAG with Chroma (More Control)

For indexing larger document collections and having full control over chunking, embedding, and retrieval behavior:
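What follows is a minimal sketch of such a pipeline, not a reference implementation. It assumes a folder of markdown files, Ollama serving `nomic-embed-text` on the default port, and the third-party `chromadb` package installed. The chunk size, overlap, database path, and collection name (`notes`) are placeholder choices to tune for your own documents.

```python
import json
import urllib.request
from pathlib import Path


def chunk_text(text: str, size: int = 800, overlap: int = 100) -> list[str]:
    """Split text into character windows of `size` that overlap by `overlap`."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    chunks, start = [], 0
    while start < len(text):
        chunks.append(text[start:start + size])
        if start + size >= len(text):
            break  # the last window already reached the end of the text
        start += size - overlap
    return chunks


def embed(text: str, model: str = "nomic-embed-text") -> list[float]:
    """Request an embedding from the local Ollama server."""
    payload = json.dumps({"model": model, "prompt": text}).encode("utf-8")
    req = urllib.request.Request(
        "http://localhost:11434/api/embeddings",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        return json.load(resp)["embedding"]


def index_folder(folder: str, db_path: str = "./chroma_db", collection: str = "notes") -> int:
    """Chunk and embed every .md file under `folder`; return the chunk count."""
    import chromadb  # third-party: pip install chromadb

    col = chromadb.PersistentClient(path=db_path).get_or_create_collection(name=collection)
    count = 0
    for path in sorted(Path(folder).rglob("*.md")):
        for n, chunk in enumerate(chunk_text(path.read_text(encoding="utf-8"))):
            # upsert keeps re-runs idempotent for unchanged chunk ids
            col.upsert(ids=[f"{path}:{n}"], documents=[chunk],
                       embeddings=[embed(chunk)], metadatas=[{"source": str(path)}])
            count += 1
    return count


def search(question: str, db_path: str = "./chroma_db", collection: str = "notes", k: int = 4) -> list[str]:
    """Return the k stored chunks closest to the question."""
    import chromadb

    col = chromadb.PersistentClient(path=db_path).get_or_create_collection(name=collection)
    hits = col.query(query_embeddings=[embed(question)], n_results=k)
    return hits["documents"][0]
```

Character-window chunking is the simplest option and is used here for that reason. Splitting on headings or paragraphs usually retrieves cleaner context once the basics work.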

What to Connect and What Not To

Read-only sources to start with:

- A specific folder of PDFs (research papers, client reference docs, project notes)
- Your Obsidian vault or exported Notion pages as markdown
- Meeting notes in text or markdown format
- Personal knowledge base content

Add later, with care:

- Calendar (read-only via iCal export or Google Calendar API)
- Email (start with a specific label or folder, never your full inbox)
- Browser reading list exports

Do not connect:

- Your entire filesystem
- Password managers, credential files, .env files
- Financial accounts
- Anything with write access before you've verified retrieval is working correctly for weeks

The read-only-first discipline is the most important safety pattern in this guide. Every write permission is a risk surface.
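One way to enforce the "do not connect" list is a filter that every file must pass before it is indexed. The sketch below combines an allowlist of document types with a blocklist of obvious secrets. The specific names and suffixes are illustrative assumptions to extend for your own machine, and `is_indexable` is a hypothetical helper.

```python
from pathlib import Path

# Illustrative lists only; adjust for your own environment.
ALLOWED_SUFFIXES = {".md", ".txt", ".pdf"}
BLOCKED_SUFFIXES = {".pem", ".key", ".kdbx"}
BLOCKED_NAMES = {"id_rsa", "id_ed25519", "credentials.json"}


def is_indexable(path: Path) -> bool:
    """Return True only for allowed document types that are not known secrets."""
    name = path.name.lower()
    if name.startswith(".env") or name in BLOCKED_NAMES:
        return False
    suffix = path.suffix.lower()
    if suffix in BLOCKED_SUFFIXES:
        return False
    return suffix in ALLOWED_SUFFIXES  # anything not explicitly allowed is skipped
```

The allowlist does most of the work. The blocklist is a second check in case someone later widens the allowed types.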

Step 3: Write a System Prompt That Actually Controls Behavior

The system prompt is the invisible configuration layer that turns a general model into your specific assistant. Most local AI setups treat it as an afterthought. The best setups treat it as the most important single decision in the build.

A system prompt that actually works has five components:

Step 4: Add Tools and Automation (Carefully)

Tools let the assistant take actions beyond just answering questions — searching files, reading your calendar, creating reminders. Every tool you add is also a risk surface. The discipline here is incremental: add one tool, test it thoroughly, then consider the next.

Read-Only Tools First

Your first-generation tools should only retrieve information, never modify anything.
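As a sketch of what a retrieval-only tool can look like, the class below exposes exactly two operations, search and read, and confines both to one folder. The class name `ReadOnlyNotes` and its methods are hypothetical, and wiring it into Open WebUI or a function-calling loop is left out. It requires Python 3.9+ for `Path.is_relative_to`.

```python
from pathlib import Path


class ReadOnlyNotes:
    """Search and read markdown notes under one folder. No write methods exist."""

    def __init__(self, root: str):
        self.root = Path(root).resolve()

    def _safe(self, relative: str) -> Path:
        """Resolve a path and refuse anything outside the allowed folder."""
        target = (self.root / relative).resolve()
        if not target.is_relative_to(self.root):
            raise PermissionError(f"{relative} is outside the allowed folder")
        return target

    def search(self, term: str) -> list[str]:
        """Return relative paths of notes that contain the term (case-insensitive)."""
        term = term.lower()
        hits = []
        for path in sorted(self.root.rglob("*.md")):
            if term in path.read_text(encoding="utf-8", errors="ignore").lower():
                hits.append(str(path.relative_to(self.root)))
        return hits

    def read(self, relative: str, max_chars: int = 4000) -> str:
        """Return at most max_chars of one note, to keep the context window small."""
        return self._safe(relative).read_text(encoding="utf-8", errors="ignore")[:max_chars]
```

The path check matters because the model chooses the arguments. A request for `../../.ssh/id_rsa` should fail in the tool, not depend on the model behaving.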

Action Tools — Add One at a Time

Once read-only tools are verified working for 1–2 weeks:

Step 5: Test Before You Trust It

Before using your assistant for real work, systematically test how it fails. Most AI assistant failures are predictable and fall into four categories.

What to Build First: Three Starting Projects

The biggest mistake in local assistant builds is trying to build everything at once. Start with one narrow use case, get it working correctly, then expand.

The Full Stack: What a Production-Ready Setup Looks Like

Here is the complete architecture for a well-configured local AI personal assistant as of May 2026.

Privacy: Verifying It's Actually Local

A local assistant is only private if you verify it's behaving locally. Configuration mistakes can route data through unexpected external services.

Verify Ollama Makes No Outbound Calls During Inference

Ollama should only make outbound network calls when you explicitly run 'ollama pull' to download a model. During inference (answering your questions), it should make zero outbound connections.
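One way to check this is to list the Ollama process's open connections while it answers a prompt (for example with `lsof -i -n -P` or `ss -tnp`) and confirm that every remote address is local. The sketch below covers only the classification step. Collecting the addresses from your OS tool is left out, and the function names are illustrative.

```python
import ipaddress


def is_local(address: str) -> bool:
    """True for loopback, private-range, and link-local addresses."""
    ip = ipaddress.ip_address(address)
    return ip.is_loopback or ip.is_private or ip.is_link_local


def external_peers(remote_addresses: list[str]) -> list[str]:
    """Return the remote addresses that are outside the machine and private ranges."""
    return [addr for addr in remote_addresses if not is_local(addr)]


# Example: addresses copied from a connection listing during inference.
print(external_peers(["127.0.0.1", "::1", "172.17.0.2", "8.8.8.8"]))
```

Private ranges are treated as local here because Open WebUI in Docker typically reaches Ollama over a private bridge address. If nothing on your LAN should be involved, tighten `is_local` to loopback only.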

Check Open WebUI for External Calls

Open WebUI runs in Docker and should not make external API calls unless you've explicitly configured them (like a Tavily search key or an external Ollama endpoint). Review your Docker container's network settings and check the Open WebUI settings panel for any external integrations you didn't intend to enable.

Separate Sensitive Documents

If you work with genuinely sensitive material — documents under NDA, client financial data, health information — keep it in a separate Chroma collection rather than your main index. Create a 'sensitive' knowledge base in Open WebUI that you activate explicitly for specific sessions, rather than mixing it into your general document index. This limits the blast radius if a configuration mistake accidentally exposes context.

Strategic Summary

Final Thoughts

Building a local AI personal assistant in 2026 is not an advanced engineering project. It is a practical afternoon project for any developer who can run a Docker container and edit a Python script. Ollama, Open WebUI, and Chroma have reduced the hard parts to mostly solved problems. The remaining work (choosing what data to connect, writing a good system prompt, adding tools incrementally, and testing before trusting) is the kind of thoughtful configuration work that produces an assistant actually shaped to your workflow, rather than a generic product built for an average user.

The path is clear: install Ollama, pull one model, open Open WebUI, index one document folder, ask one question about something you actually want to know. That first correct, private answer, generated entirely on your hardware, is when the real value becomes obvious. From there: one new data source, one refined system prompt iteration, one new tool, at whatever pace your use cases demand, with no vendor telling you what the assistant can or can't do.

Install Ollama this weekend. Pull one model. Index one document folder. Ask it a real question. The setup takes under two hours. That first interaction is worth more than any amount of reading about local AI.
